Prompt Token Counter

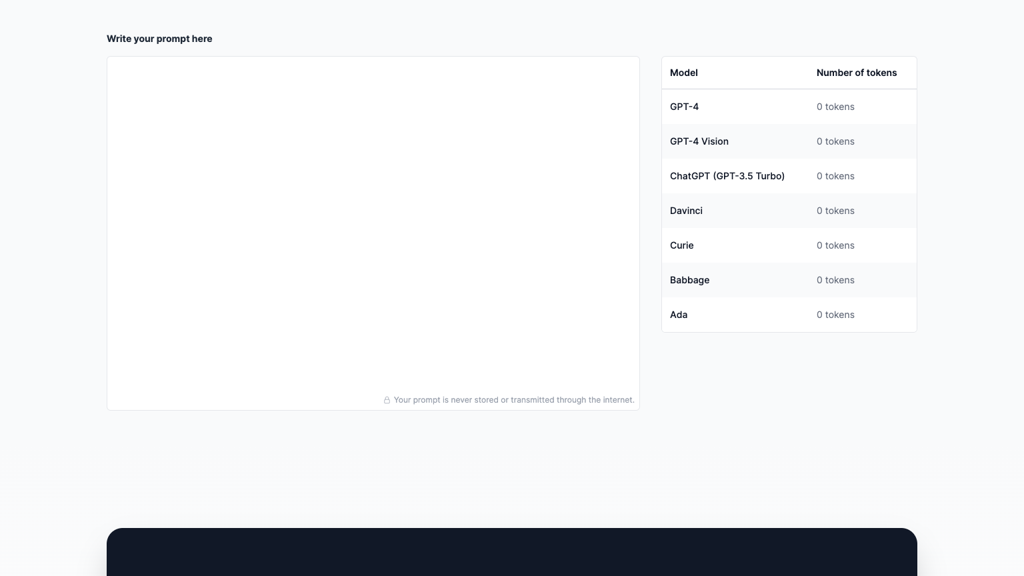

Prompt Token Counter is an AI tool that calculates token counts for prompts to help optimize costs and context limits.

What is Prompt Token Counter

Prompt Token Counter is a utility that calculates how many tokens a piece of text occupies for a given AI model. Token counts matter because API usage is typically billed per token and each model accepts only a limited number of tokens in its context window. The tool counts tokens with the tokenization methods used by popular model families, including GPT, Claude, and others.

Features include multi-model support, accurate counting, cost estimation, character comparison, batch processing, and API access. The platform helps developers, content creators, and AI users optimize their prompts and predict costs. Prompt Token Counter is an essential utility for anyone working seriously with AI language models.

How to use Prompt Token Counter

1. Paste your text or prompt

2. Select the AI model for accurate tokenization

3. View token count and cost estimate

4. Optimize prompts based on results
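
The steps above can be approximated in a few lines of Python. This is a rough sketch, not the tool's implementation: it assumes the common rule of thumb of about four characters per token for English text instead of a real tokenizer, and the model names and prices in `PRICES_PER_MILLION` are hypothetical placeholders.

```python
import math

# Hypothetical prices in USD per one million input tokens (placeholders, not real rates).
PRICES_PER_MILLION = {
    "model-a": 1.00,
    "model-b": 5.00,
}

# Assumption: roughly 4 characters per token for English text.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Approximate the token count from the character count."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def estimate_cost(text: str, model: str) -> float:
    """Estimate the input cost in USD for sending `text` to `model`."""
    tokens = estimate_tokens(text)
    return tokens / 1_000_000 * PRICES_PER_MILLION[model]


prompt = "Summarize the following article in three bullet points."
tokens = estimate_tokens(prompt)
cost = estimate_cost(prompt, "model-a")
print(f"{len(prompt)} characters, ~{tokens} tokens, ~${cost:.6f}")
```

A character-based estimate like this can be noticeably off for code, non-English text, or unusual formatting, which is why selecting the actual model's tokenizer in step 2 matters.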

Applications & Use Cases

- Cost optimization

- Context management

- Prompt optimization

- API budgeting

- Development testing

- Content planning
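
For the context management use case, a token count is usually compared against a model's context limit while leaving room for the response. The sketch below illustrates that check; the function names and the 8,000-token limit are hypothetical values chosen for the example, not figures from the tool or any specific model.

```python
def remaining_tokens(prompt_tokens: int, context_limit: int, reserved_for_output: int = 0) -> int:
    """Return how many tokens are left after the prompt and the reserved output budget."""
    if prompt_tokens < 0 or context_limit < 0 or reserved_for_output < 0:
        raise ValueError("token counts must be non-negative")
    return context_limit - prompt_tokens - reserved_for_output


def fits_context(prompt_tokens: int, context_limit: int, reserved_for_output: int = 0) -> bool:
    """Return True if the prompt plus the reserved output budget fits in the context window."""
    return remaining_tokens(prompt_tokens, context_limit, reserved_for_output) >= 0


# Hypothetical numbers: an 8,000-token context window, a 6,500-token prompt,
# and 1,000 tokens reserved for the model's reply.
print(fits_context(6500, 8000, reserved_for_output=1000))      # True
print(remaining_tokens(6500, 8000, reserved_for_output=1000))  # 500
```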

Pricing

Free to use.